The Pattern

Before it's obvious. A daily AI culture intelligence product. 140+ sources synthesised by Claude Sonnet, narrated in my cloned voice, deployed automatically every morning. 14 scripts, ~9,700 lines of code, zero team.

Visit thepattern.media

Culture moves faster than anyone can read

I spent fifteen years in advertising trying to stay on top of culture. You know the drill: fifty tabs open, three newsletters half-read, a podcast queue you'll never finish, and that nagging feeling you've missed the thing everyone's already talking about. The problem isn't access to information. It's synthesis. Anyone can skim headlines. The hard part is connecting them, seeing the pattern underneath. That's what a good strategist does in a meeting, but nobody was doing it daily, automatically, at scale. So I built it.

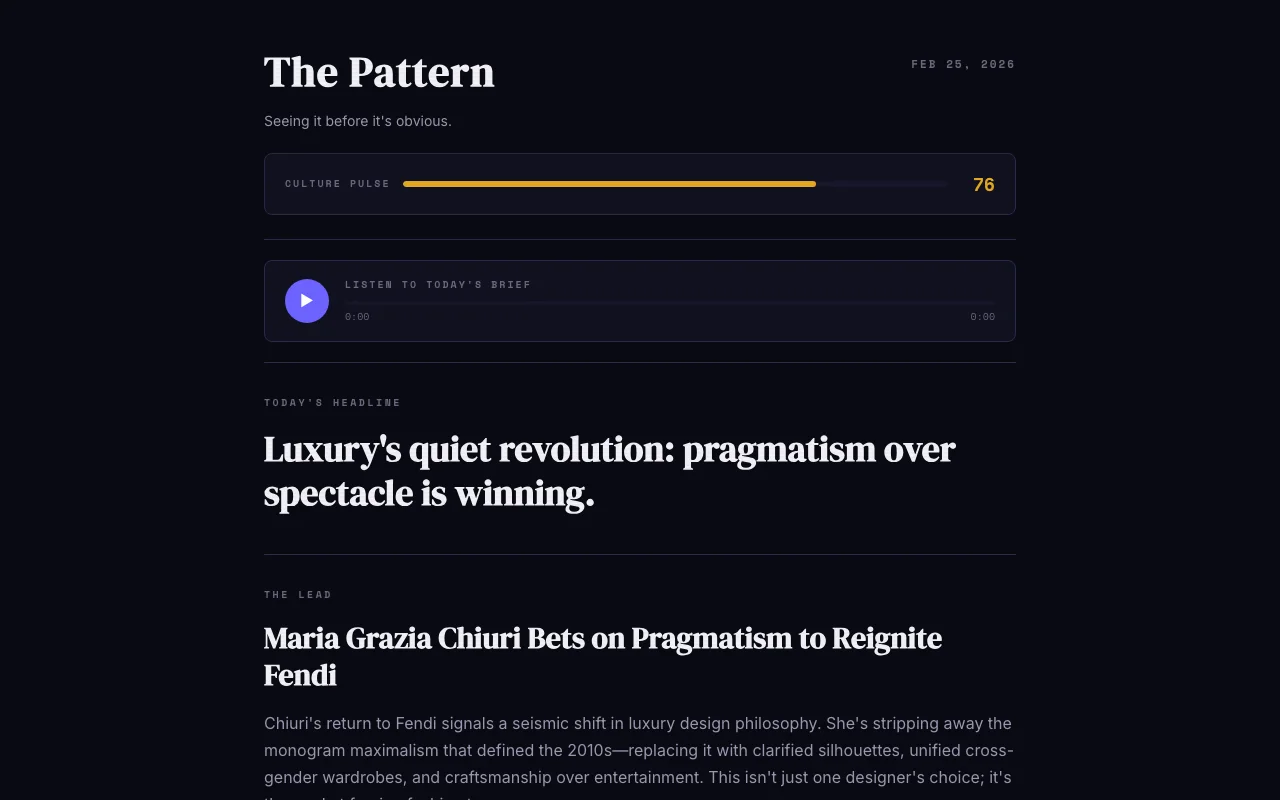

The Pattern is a daily culture intelligence product. Every morning at 7am, it scans 140+ sources across fashion, design, tech, brands, music, art, and lifestyle, finds the through-lines, and tells you what matters before the rest of the industry catches up. Not a link dump. Not a summary. An editorial perspective on the day's culture, generated by AI but shaped entirely by my curatorial instincts.

Fetch, synthesise, narrate, predict, deploy

The pipeline starts with CultureTerminal, my culture news aggregator that scores 140+ RSS feeds across eight culture domains. Every morning at 7am UTC, a GitHub Actions workflow kicks off. It fetches the top-scoring articles, then hands everything to Claude Sonnet for synthesis. Not summarisation. Synthesis. The AI reads across all of them and finds the connections: the pattern.
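The hand-off from scoring to synthesis can be sketched roughly like this. It is an illustration only: the `Article` shape, the `top_articles` and `synthesis_input` helpers, and the default limit of 20 are all assumptions, not the actual CultureTerminal code.

```python
from dataclasses import dataclass
import heapq


@dataclass(frozen=True)
class Article:
    title: str
    domain: str   # e.g. "fashion" or "tech" (hypothetical labels)
    score: float  # relevance score assigned upstream by the aggregator


def top_articles(articles: list[Article], limit: int = 20) -> list[Article]:
    """Return the `limit` highest-scoring articles, best first."""
    return heapq.nlargest(limit, articles, key=lambda a: a.score)


def synthesis_input(articles: list[Article]) -> str:
    """Flatten the selection into one text block so the model sees
    every article in a single call and can read across them."""
    return "\n".join(f"[{a.domain}] {a.title}" for a in articles)
```

Passing everything in one call, rather than one article at a time, is what lets the model look for connections instead of producing isolated summaries.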

The output is a full editorial product: a Culture Pulse score (0-100 for how much is happening), The Lead (the biggest story with context), 5 Signals (punchy one-line observations across categories), The Pattern (the hidden connection across today's coverage), predictions with confidence scores, conversation starters, and a quote of the day. Then it generates audio in my cloned voice via ElevenLabs, creates PNG social cards, updates the prediction ledger, detects cultural arbitrage, builds 13+ HTML pages, and deploys to Netlify. By the time I wake up, the day's briefing is live.
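Because audio, social cards, and page builds all depend on the brief, it helps to validate the model's output before anything downstream runs. Here is a minimal sketch of that check. The field names, the 0-100 confidence scale, and the `DailyBrief` and `Prediction` classes are assumptions for illustration, not the real schema.

```python
from dataclasses import dataclass, field


@dataclass
class Prediction:
    claim: str
    confidence: int  # assumed to be a 0-100 percentage


@dataclass
class DailyBrief:
    pulse: int          # Culture Pulse, 0-100
    lead: str           # The Lead
    signals: list[str]  # the five one-line Signals
    pattern: str        # The Pattern
    predictions: list[Prediction] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Fail fast so a malformed brief never reaches audio or deploy.
        if not 0 <= self.pulse <= 100:
            raise ValueError(f"pulse must be 0-100, got {self.pulse}")
        if len(self.signals) != 5:
            raise ValueError(f"expected 5 signals, got {len(self.signals)}")
        for p in self.predictions:
            if not 0 <= p.confidence <= 100:
                raise ValueError(f"confidence must be 0-100, got {p.confidence}")
```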

"I wanted to build the thing I wished existed: a daily culture briefing that doesn't just tell you what happened, but connects the dots across everything and tells you why it matters. And I wanted it to sound like me."

What gets generated every morning

The daily brief is the core: Culture Pulse, headline, lead story, five signals, the pattern, predictions, conversation starters, and a quote. But the product goes much deeper than a single page.

A prediction ledger tracks every bold claim with a timeframe, confidence score, and running hit rate. When predictions expire, they're marked hit or miss. The ledger creates accountability and compounding credibility.
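The ledger logic can be sketched in a few lines. Everything here is hypothetical: the `LedgerEntry` fields, the 0-1 confidence scale, and the two helpers. The sketch also assumes the hit rate counts only resolved predictions, so open claims neither help nor hurt it.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class LedgerEntry:
    claim: str
    made_on: date
    expires_on: date
    confidence: float               # assumed 0.0-1.0 scale
    outcome: Optional[bool] = None  # None = unresolved, True = hit, False = miss


def due_for_review(entries: list[LedgerEntry], today: date) -> list[LedgerEntry]:
    """Predictions whose timeframe has passed but have no verdict yet."""
    return [e for e in entries if e.outcome is None and e.expires_on <= today]


def hit_rate(entries: list[LedgerEntry]) -> Optional[float]:
    """Share of resolved predictions that were hits, or None if none are resolved."""
    resolved = [e for e in entries if e.outcome is not None]
    if not resolved:
        return None
    return sum(1 for e in resolved if e.outcome) / len(resolved)
```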

Cultural arbitrage detection spots when a brand is covered very differently across categories. The gap between how fashion talks about a brand versus how tech talks about it reveals something neither side sees alone.
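One way to make "covered very differently" concrete is to compare a brand's average tone per category and flag large gaps. This is a sketch under stated assumptions, not the actual detector: it assumes each mention already carries a tone score between -1 and 1, and the `coverage_gaps` function and its 0.5 threshold are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def coverage_gaps(mentions, threshold=0.5):
    """Find brands whose average tone differs sharply between categories.

    `mentions` is an iterable of (brand, category, tone) tuples, where tone
    is an assumed sentiment score in [-1, 1]. Returns a dict mapping each
    flagged brand to (warmest_category, coolest_category, gap).
    """
    by_brand = defaultdict(lambda: defaultdict(list))
    for brand, category, tone in mentions:
        by_brand[brand][category].append(tone)

    gaps = {}
    for brand, categories in by_brand.items():
        if len(categories) < 2:
            continue  # a gap needs at least two categories to compare
        averages = {c: mean(tones) for c, tones in categories.items()}
        warmest = max(averages, key=averages.get)
        coolest = min(averages, key=averages.get)
        gap = averages[warmest] - averages[coolest]
        if gap >= threshold:
            gaps[brand] = (warmest, coolest, gap)
    return gaps
```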

Trend memory tracks recurring signals across days. If the same theme appears three times, it gets flagged. First-spotted timestamps record when The Pattern covered something before it went mainstream.
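Trend memory comes down to two facts per theme: when it was first seen, and on how many days it has appeared. The sketch below is illustrative only. The `TrendMemory` class is hypothetical, "three times" is read as three distinct days, and themes are matched by normalised exact text, where a real system would likely need fuzzier matching.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ThemeRecord:
    first_spotted: date
    days_seen: set[date] = field(default_factory=set)


class TrendMemory:
    def __init__(self, flag_after: int = 3) -> None:
        self.flag_after = flag_after
        self.records: dict[str, ThemeRecord] = {}

    def record(self, theme: str, day: date) -> bool:
        """Log a sighting; return True once the theme has recurred enough to flag."""
        key = theme.strip().lower()
        rec = self.records.get(key)
        if rec is None:
            rec = self.records[key] = ThemeRecord(first_spotted=day)
        rec.days_seen.add(day)  # a set, so repeats on the same day count once
        rec.first_spotted = min(rec.first_spotted, day)
        return len(rec.days_seen) >= self.flag_after
```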

An interactive dashboard shows category velocity, signal density, and pattern evolution over time. Think Bloomberg Terminal for culture.

Engagement mechanics include reading streaks, weekly progress, reaction buttons, and a visitor counter that nudges readers towards subscribing. It all runs client-side, with no database needed.

Why it works the way it works

AI synthesis, not just aggregation. CultureTerminal already aggregates. I didn't need another list of links. What I needed was the bit that happens in a strategist's head: reading five separate articles and realising they're all pointing at the same shift. That's what Claude Sonnet does here. The prompt is heavily engineered with examples of good versus bad output. It doesn't ask the AI to summarise. It asks it to find what connects. The synthesis is the product.

Why audio matters. Half the people I know consume their morning briefing while commuting or getting ready. Text-only means you have to sit down and read. Audio means The Pattern fits into existing habits. The briefing plays in a custom-built player with a circular progress ring, live chapter strip, frequency visualiser, transcript overlay, and position memory across sessions. It looks and feels like a product, not a widget.

Why daily cadence. Weekly roundups are too slow. Culture doesn't wait for Friday. But hourly updates would be noise. Daily is the sweet spot: enough time for real patterns to emerge, frequent enough that you're never more than 24 hours behind. The 7am slot is deliberate: ready before the working day starts.

Why my cloned voice. If The Pattern is my editorial product, it should sound like me. A generic AI voice makes it feel like a tool. My voice makes it feel like a person with a point of view. Thirty minutes of training data was enough for ElevenLabs to capture the cadence and tone. It's uncanny, and people always ask about it.

Why the tone. The copy is confident and insider. Section labels read "SIGNALS", "KEEP TABS", "TALK ABOUT THIS". The footer says "Built solo. Powered by obsession." It assumes the reader is smart enough to get it. That's a deliberate editorial choice, not an accident.

Lessons from automating editorial judgement

The best AI products are opinionated. The temptation with AI-generated content is to make it neutral, balanced, safe. But that's not what good editorial does. Good editorial has a point of view. The Pattern works because the prompts are opinionated. They don't ask Claude to summarise. They ask it to find what's interesting, what's surprising, what connects. The opinion is baked into the system design, not left to chance.

Automation is a creative medium. People hear "automated pipeline" and think boring infrastructure. But choosing what to automate and how to shape the output is a creative decision. The editorial structure, the tone of voice, the daily cadence, the cloned audio. Those are all creative choices. The automation just means they happen at scale, every single day, without me lifting a finger. That's not removing the creativity. It's encoding it.

Taste becomes the only differentiator. When the AI does the making, all that's left is your judgement. Which sources to include. How to score them. What the brief should sound like. What the design should feel like. The code is the mechanism. The taste is the product. That's the lesson that applies to everything I build.

"Every morning at 7am, a system I built reads 140+ culture sources, writes an editorial briefing, narrates it in my voice, generates social cards, updates a prediction ledger, and publishes it to the internet. I'm asleep when it happens."

Read today's culture intelligence briefing. New edition every morning at 7am.
